Even the errors are broken!

An amused but slightly exasperated developer once turned to me and said, “I not only have to get all the features correct, I have to get the errors correct too!” He was referring to the need to implement graceful and useful failure behaviour in his application.

Rather than presenting the customer or user with an error message or stack trace, give them a route to succeed in their goal, e.g. finding the product they seek, or even buying it.

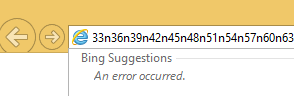

Bing Suggestions demonstrates ungraceful failure.

Graceful failure can take several forms. For a counter-example, take a look at this Bing [search] Suggestions bug in Internet Explorer 11.

As you can see, the user is presented with a useful feature most of the time. But should they paste a long URL into the location bar, they get hit with an error message.

There are multiple issues here. What allowed this failure to reach the user in the first place? Why is the user presented with an error message at all? What could they possibly do with it? Bing Suggestions does not fail gracefully.

In this context, presenting the user with an error message is a bug, and probably a worse one than the fact that the suggestions themselves don’t work. If the suggestions failed silently, the number of users who were consciously affected would probably be greatly reduced.
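To make the idea of failing silently concrete, here is a minimal sketch in Python. It is not how Bing Suggestions or Internet Explorer actually work; the names `safe_suggestions` and `flaky_fetch` are hypothetical and exist only for illustration. The wrapper assumes that a missing suggestion list is harmless to the user, so any failure is logged for developers and an empty list is returned instead of an error.

```python
import logging
from typing import Callable, List

logger = logging.getLogger("suggestions")


def safe_suggestions(query: str, fetch: Callable[[str], List[str]]) -> List[str]:
    """Return suggestions for query, or an empty list if the lookup fails."""
    try:
        return list(fetch(query))
    except Exception:
        # Record the failure for developers; the user simply sees no suggestions.
        logger.exception("suggestion lookup failed for a query of length %d", len(query))
        return []


def flaky_fetch(query: str) -> List[str]:
    # Hypothetical backend that rejects long input, loosely mimicking the bug above.
    if len(query) > 100:
        raise ValueError("query too long")
    return [query + " news", query + " images"]


short_result = safe_suggestions("bing", flaky_fetch)
long_result = safe_suggestions("x" * 500, flaky_fetch)
```

With a short query the user gets suggestions as usual. With a very long one they get none, and nothing interrupts what they were doing. The failure is still recorded in the log, so the bug stays visible to the people who can fix it.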

By causing the software to fail, we often appear to be destructive, but again we are learning more about the application through its failure. Handling failures gracefully is another feature of the software, and one that matters to real users in the real world. The user wants to use your product to achieve their goal. They don’t want to see every warning light in the pilot’s cockpit. Just tell them if they need to put their seat belt on.