Into the testing hinterland.

Why do we refer to our ancestors as cavemen? The evidence, of course! The cave paintings and the rubbish piles found in caves all round the world. It’s simple: cavemen lived in caves, painted on the walls and threw their rubbish into the corner of the cave. Thousands of years later we find the evidence, demonstrating that they lived in caves. Hence the moniker ‘caveman’.

How many caves have you seen? Seriously, how many have you seen or even heard of? Now I’m lucky: as a former resident of Nottingham [in the UK], I’ve at least heard of a few. But if you think about it, you probably haven’t seen that many. Even assuming you’ve seen a fair few, how many were dry, spacious and safe enough for human habitation? As you can guess, my point is: there probably isn’t a great selection of prime cave real estate available.

It doesn’t add up: the whole of mankind descended from cave [dwelling] men? Before you roll your eyes and think I’m some sort of Creationist, think again about the above assumptions. There is a simple answer - our ancestors didn’t all live in caves. They probably lived in many places and environments. They had the tools and skills to hunt and kill animals. A simple shelter made from animal skins and branches probably wasn’t beyond their means. The difference with these more temporary homes is that they wouldn’t still be around 10,000 years later. The paintings on the inside of the makeshift shelters would rot or wash away within just a few years. This sample bias leaves us with only the evidence tucked away deep in caves, away from the elements and later inhabitants. When we now characterise our ancestors as cavemen, we are basing our assumptions on a strongly biased sample.

Now let’s imagine I’m testing a large and complicated computer system. It has many thousands of lines of code. It’s been built over several years. The software has probably had multiple authors, testers, business analysts and other interested parties adding and removing bugs over time. The system probably consists of different hardware and operating systems handling different parts of the work. As such, there is a very large test space - a lot could go wrong.

The developers catch some bugs with their unit tests. They probably do some ‘manual’ tests and find more issues. The testers take a look and find a bunch more issues. The testers run their automated checks - they pick up a couple more issues. We’re building up a picture of what’s broken.

But how good is our picture of the application?

This is a bit like asking: how is our sample biased? What parts of the scene can I see, and therefore draw and explain to my customers? I’m limited: I can only interact with the system in certain ways. The range of inputs I can give the system is limited to what it will accept through its defined interfaces. The information I can extract from the system is also limited. These limits are not just due to my tools (logs, debuggers and so on) but also to time: I don’t have the time to examine the whole system. On top of these physical constraints, I can only conceive of some subset of the potential tests. My own cognitive biases narrow that selection further; many of the possible tests never even occur to me.

These limitations leave a vast area of the test space unexamined. It’s worse than that: we have examined a small part of the system, but we don’t know how representative our sample of the issues and successes is. For all we know, the remainder of the system is gravely flawed, or even bug-free!
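To make the sampling problem concrete, here is a minimal sketch with entirely made-up numbers. The area names, sizes and bug counts are hypothetical, and in real life the `bugs` figures for the unreachable areas are exactly what we don’t know. It compares the bug density we would measure in the parts of a system we can reach with the density across the whole system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Area:
    name: str
    reachable: bool  # can our tools, interfaces and time get us here?
    bugs: int        # true bug count (hypothetical; unknown in practice)
    size: int        # arbitrary units, e.g. thousands of lines of code


# Invented figures, purely for illustration.
SYSTEM = [
    Area("login UI", True, 4, 10),
    Area("reporting UI", True, 6, 10),
    Area("batch scheduler", False, 30, 40),      # gravely flawed, never examined
    Area("legacy billing core", False, 0, 40),   # bug-free, never examined
]


def bug_density(areas):
    """Bugs per unit of size across the given areas."""
    return sum(a.bugs for a in areas) / sum(a.size for a in areas)


def coverage(areas):
    """Fraction of the system (by size) that we were able to examine."""
    return sum(a.size for a in areas if a.reachable) / sum(a.size for a in areas)


sampled = [a for a in SYSTEM if a.reachable]
sampled_density = bug_density(sampled)  # what our testing tells us
true_density = bug_density(SYSTEM)      # what we would see with full access

print(f"examined {coverage(SYSTEM):.0%} of the system")
print(f"density in the sample: {sampled_density:.2f}")
print(f"density overall:       {true_density:.2f}")
```

In this toy system the sample covers a fifth of the code and tells us nothing about either unexamined area: one is riddled with bugs and the other has none, and our figures look the same either way.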

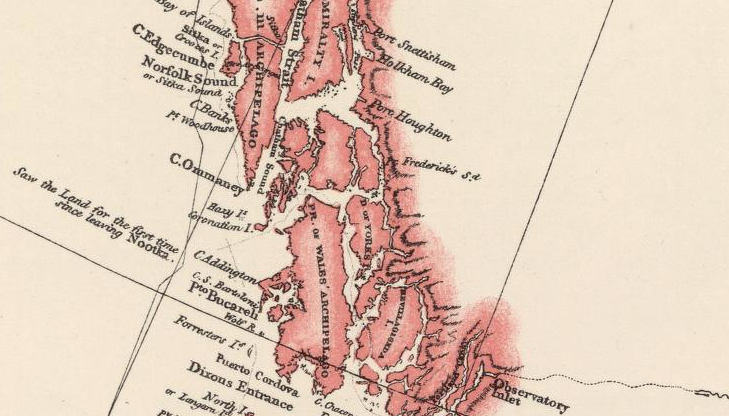

Our picture is probably best described as a map. A map where the easy-to-access areas are detailed and the more remote areas are sparsely drawn and devoid of detail. It is similar to how early explorers mapped coastlines and rivers with the elaborate minutiae of what they could see, but left vast areas of the interior uncharted. The bugs we find do not accurately represent the whole terrain, but rather just a visible fraction of the landscape.

In summary, the systems we create and try to test are, for the most part, unexplored. We need to find new and better ways to venture into the hinterland of complexity and hidden problems. We must find the means to see further and not blind ourselves to the problems in our software.